Kroll, Inc. approached DevsData LLC with a complex data challenge: extracting and processing information from multiple websites designed to resist automated collection. These sources included financial databases, eCommerce listings, real estate platforms, and unstructured media content. The client required a set of intelligent scrapers that could gather large volumes of structured and unstructured data in real time while maintaining accuracy, confidentiality, and operational efficiency.

The project’s goal was not only to automate manual research tasks but also to supply Kroll’s internal analytics and risk intelligence platforms with continuously updated datasets used in market monitoring, compliance reviews, transaction analysis, and investigative research. DevsData LLC was selected for its proven record in developing resilient scraping systems capable of handling high-volume, protected sources. The collaboration brought together Kroll’s domain expertise and DevsData LLC’s advanced engineering skills to deliver a scalable, high-performance data extraction framework.

Kroll is a global leader in risk and financial advisory solutions, known for nearly a century of trusted expertise in governance, valuation, corporate research, and investigative analysis. With a team of over 6,500 professionals across multiple continents, the company uses analytical methods and technical systems to help clients navigate complex business environments and make informed decisions.

Kroll’s work spans risk management, compliance, transaction advisory, and strategic valuation. The firm supports clients during mergers, restructurings, disputes, and regulatory reviews by providing financial analysis, valuation reports, investigative research, and compliance assessments. These services help organizations identify financial, regulatory, and operational risks early in planning and transaction stages rather than after decisions are already made. Guided by values of integrity and accountability, Kroll has built long-term partnerships with leading organizations worldwide, providing them with the clarity and insight necessary to maintain a competitive advantage.


The collaboration covered the full design and implementation of multiple large-scale web scrapers tailored to Kroll’s operational needs.

DevsData LLC’s task was to gather data from numerous high-security, structurally complex websites across various industries, process it into a structured format, and deliver it to Kroll’s analytical systems.

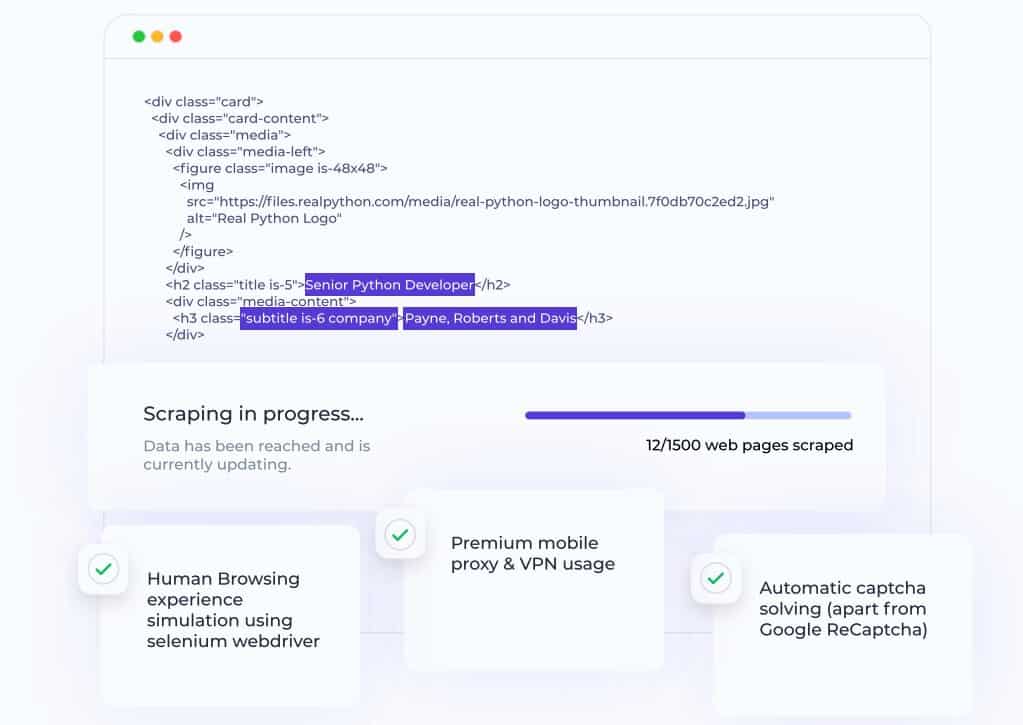

This included the extraction of financial data, product pricing, and real estate information, as well as market and sentiment insights from media and social platforms, covering more than 30 protected websites and thousands of individual pages updated on a continuous basis. The project also required managing proxy rotation, captcha handling, and traffic throttling to regulate request rates and operate within site-specific technical limits. In addition, DevsData LLC implemented automation pipelines that transformed unstructured data into usable formats compatible with Kroll’s internal data warehouse.
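The traffic throttling mentioned above can be illustrated with a minimal per-host rate limiter. This is a sketch under stated assumptions, not the project's actual implementation: the `HostThrottle` class, its parameters, and the two-second interval are hypothetical names and values chosen for illustration.

```python
import time
from urllib.parse import urlparse


class HostThrottle:
    """Enforce a minimum delay between requests to the same host.

    Hypothetical helper for illustration; the clock and sleep functions
    are injectable so the behavior can be checked without real waiting.
    """

    def __init__(self, min_interval_s, clock=time.monotonic, sleep=time.sleep):
        self.min_interval_s = min_interval_s
        self._clock = clock
        self._sleep = sleep
        self._last_request = {}  # host -> time at which the last request was allowed

    def wait(self, url):
        """Block until the host in `url` may be contacted again; return the delay."""
        host = urlparse(url).netloc
        now = self._clock()
        last = self._last_request.get(host)
        delay = 0.0
        if last is not None:
            # Remaining part of the minimum interval, never negative.
            delay = max(0.0, self.min_interval_s - (now - last))
        if delay > 0:
            self._sleep(delay)
        self._last_request[host] = now + delay
        return delay


if __name__ == "__main__":
    throttle = HostThrottle(min_interval_s=2.0)
    for url in ("https://a.example/1", "https://a.example/2", "https://b.example/1"):
        waited = throttle.wait(url)
        print(f"{url}: waited {waited:.1f}s")
```

Keeping one timestamp per host means a slow, strictly limited site does not hold back requests to unrelated sites, which is one plausible way to respect site-specific limits.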

The systems were designed to operate continuously, allowing Kroll to access refreshed datasets without manual intervention. Regular monitoring, performance tracking, and error-handling mechanisms were integrated to guarantee the reliability of the entire infrastructure.

The main technical obstacle was the nature of the target websites themselves. Many had complex, dynamically rendered frontends, making data retrieval difficult without simulating user interactions. Others implemented strong anti-bot systems, captchas, and IP blacklisting mechanisms that prevented traditional scraping approaches from functioning effectively.

Another challenge involved assembling a team with the right engineering expertise. This type of project required specialists fluent in Python, Selenium, Scrapy, BeautifulSoup, low-level HTTP requests, proxy rotation, and cloud-based deployment. Developers with this combination of skills are difficult to find, and the project needed professionals experienced in both large-scale scraping and secure data handling.

In parallel with these technical constraints, the project involved client-facing and operational challenges. Kroll’s internal stakeholders relied on the extracted data for live analytical workflows, which required frequent scope adjustments, priority shifts, and validation cycles as new sources were introduced. This created a need for continuous coordination between engineering teams and data analysts, detailed reporting on scraper performance, and rapid turnaround on data quality issues. The delivery process had to remain predictable and transparent while operating under strict confidentiality requirements and evolving compliance controls.

The client also required a careful balance between minimizing server requests per session and achieving high data coverage without introducing excessive latency. In practice, this meant prioritizing critical data fields, tuning request frequency, and optimizing parsing logic to extract as much information as possible per request. Several sites had data deeply nested within HTML structures, requiring custom parsing logic and memory-efficient extraction methods. Strict confidentiality and operational security standards also had to be maintained throughout the project lifecycle, in line with Kroll’s internal compliance and governance procedures.

Before development began, DevsData LLC worked with Kroll’s analytical and compliance teams to define data use cases, reporting dependencies, update frequency, and confidentiality constraints tied to live operational workflows. This pre-project phase included source mapping, field-level data definition, volume estimation, and validation criteria used in Kroll’s internal risk, compliance, and research systems. These requirements formed the baseline for scraper design, data normalization rules, and deployment architecture.

To meet these requirements, DevsData LLC developed a modular scraping architecture composed of separate components for data extraction, request orchestration, proxy and session management, and post-processing. Each scraper was custom-built to handle different site structures, response formats, and security layers while operating under Kroll’s strict data protection protocols.

A dedicated engineering team was assembled for this project, focusing on professionals experienced in large-scale scraping, secure data handling, and working with demanding online sources. This setup allowed the team to contribute to complex project requirements from the first weeks of the engagement.

The team simulated human-like browsing behavior using Selenium WebDriver, which allowed interaction with JavaScript-heavy websites. Premium proxy networks and VPNs were used to distribute requests across regions and prevent IP blocking. For sensitive operations, the system relied on low-level HTTP requests with session management to keep resource usage minimal. Automatic captcha solving was implemented for most captcha types; Google reCAPTCHA was the exception and required manual validation.
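Proxy rotation of the kind described above could look roughly like the following round-robin pool. It is a simplified sketch, assuming a fixed list of proxy addresses. `ProxyPool` and the example addresses are hypothetical, and no network calls are made.

```python
from itertools import cycle


class ProxyPool:
    """Round-robin proxy rotation that skips proxies marked as blocked.

    Hypothetical helper for illustration only.
    """

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._proxies = list(proxies)
        self._blocked = set()
        self._cycle = cycle(self._proxies)

    def mark_blocked(self, proxy):
        """Exclude a proxy after the target site has rejected it."""
        self._blocked.add(proxy)

    def next_proxy(self):
        """Return the next usable proxy, or raise if none remain."""
        # One full pass over the list is enough to find any usable proxy.
        for _ in range(len(self._proxies)):
            proxy = next(self._cycle)
            if proxy not in self._blocked:
                return proxy
        raise RuntimeError("all proxies are blocked")


if __name__ == "__main__":
    # Placeholder addresses from the documentation IP range.
    pool = ProxyPool(["http://203.0.113.10:8080", "http://203.0.113.11:8080"])
    print(pool.next_proxy())
    print(pool.next_proxy())
```

With the `requests` library, the returned address would typically be passed through the `proxies` argument, for example `requests.get(url, proxies={"http": proxy, "https": proxy})`.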

All scrapers were deployed across multiple Google Cloud instances, enabling parallel data collection and high throughput. Continuous communication between DevsData LLC’s engineers and Kroll’s in-house data specialists helped align extraction logic with analytical goals.

Dedicated review cycles were held to validate field-level accuracy, confirm update frequency, and adjust source priorities as new investigative or compliance needs emerged. Data samples were reviewed against Kroll’s internal reporting standards, and change requests were implemented through controlled release cycles to maintain stability while improving coverage and precision.

Over the course of the engagement, DevsData LLC built and maintained several distinct scraping systems, each supporting specific workflows, such as investigative research, compliance monitoring, transaction analysis, and market intelligence reporting. The team’s experience in complex data engineering projects has also been applied in other DevsData LLC engagements, including the Fastlane and Emurgo case studies, which involved large-scale backend systems, high-volume data processing, and technically demanding integration environments. The sections that follow outline the main systems built as part of this project.

For rapid data extraction, a lightweight system was developed within Kroll’s data program that could be launched within days. One implementation supported an NLP-based analysis workflow that Kroll used for hedge fund research, in which financial news was collected, scored, and categorized by sentiment and relevance.

A dedicated system was built within the same Kroll engagement to process responses related to 300 million SSNs through protected online forms. Ten virtual machines were deployed on Google Cloud, each configured to handle low-level HTTP requests with limited concurrency, allowing traffic to be distributed efficiently while minimizing detection risk.
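One way to spread a large workload across ten machines with limited concurrency on each is deterministic hash-based sharding. The sketch below assumes each machine knows its own index. The function names, the `max_concurrency` value of 4, and the `record-N` keys are hypothetical, and the handler stands in for whatever request logic a real worker would run.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor

NUM_WORKERS = 10  # matches the ten virtual machines described above


def shard_for(key, num_workers=NUM_WORKERS):
    """Map a record key to a worker index in a stable, reproducible way."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_workers


def run_shard(keys, worker_id, handler, max_concurrency=4, num_workers=NUM_WORKERS):
    """Process only the keys assigned to `worker_id`, with bounded concurrency."""
    mine = [k for k in keys if shard_for(k, num_workers) == worker_id]
    # The thread pool size caps the number of in-flight requests per machine.
    with ThreadPoolExecutor(max_workers=max_concurrency) as pool:
        return list(pool.map(handler, mine))


if __name__ == "__main__":
    keys = [f"record-{i}" for i in range(50)]
    for worker_id in range(NUM_WORKERS):
        done = run_shard(keys, worker_id, handler=lambda k: k.upper())
        print(worker_id, len(done))
```

Because the shard is derived from the key itself, no coordinator is needed: every machine can compute its own share independently, and no key is processed twice.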

Another system supported Kroll’s market and media intelligence workflows by collecting data from large public databases such as Filmweb and Wikipedia. Using BeautifulSoup and Scrapy, the scrapers retrieved deeply embedded HTML content and navigated multiple page templates to assemble structured datasets across thousands of entries while keeping request volume and resource usage controlled.
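The idea of pulling deeply nested fields out of several page templates can be sketched as follows. The project used BeautifulSoup and Scrapy. This example deliberately swaps in Python's standard-library `html.parser` so that it runs without dependencies, and the CSS class names and field names are invented for illustration.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag and must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}

# Hypothetical templates: CSS class -> output field name.
TEMPLATES = [
    {"film-title": "title", "film-year": "year"},
    {"entry__name": "title", "entry__date": "year"},
]


class FieldExtractor(HTMLParser):
    """Collect the text of elements whose class maps to a wanted field."""

    def __init__(self, class_to_field):
        super().__init__()
        self._class_to_field = class_to_field
        self._stack = []  # one entry per open element: field name or None
        self.record = {}

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        classes = (dict(attrs).get("class") or "").split()
        field = next((self._class_to_field[c] for c in classes
                      if c in self._class_to_field), None)
        self._stack.append(field)

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self._stack:
            self._stack.pop()

    def handle_data(self, data):
        text = data.strip()
        if not text:
            return
        # Attribute the text to the nearest enclosing element that maps to a field.
        for field in reversed(self._stack):
            if field is not None:
                self.record[field] = (self.record.get(field, "") + " " + text).strip()
                break


def extract(html, templates=TEMPLATES):
    """Return the record from the first template that yields every field, else None."""
    for mapping in templates:
        parser = FieldExtractor(mapping)
        parser.feed(html)
        parser.close()
        if set(parser.record) == set(mapping.values()):
            return parser.record
    return None


if __name__ == "__main__":
    page = '<div><h1 class="film-title">Example <b>Film</b></h1><span class="film-year">1999</span></div>'
    print(extract(page))
```

The parser processes the page as a stream and keeps only the wanted fields, which is one simple way to keep memory use low. It assumes reasonably well-formed markup, and a production scraper would need more tolerant handling of unclosed tags.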

DevsData LLC engineers refined Kroll’s internal scraping tools, optimizing their logic, expanding coverage to additional data sources, and improving performance across confidential datasets. This involved code reviews, testing, and scaling measures to support sustained use across Kroll’s analytical teams.


A separate set of scrapers was developed within Kroll’s research workflows for more than 30 eCommerce websites. These systems gathered structured data on product categories, pricing, and availability while adapting to site-specific protection layers and layout changes.

The partnership enabled Kroll to automate and streamline what had previously been a highly manual, fragmented data collection process. With the new scraping infrastructure, analysts and researchers can access structured datasets updated in real time, significantly reducing the turnaround time for market, risk, pricing, and operational reports.

The automated pipelines replaced manual data gathering with a continuous, scalable system that securely handles high-volume requests. DevsData LLC worked closely with Kroll’s data science and analytics teams to align extraction logic with modeling requirements, define normalization rules, and validate dataset structures before integration into analytical workflows. Data governance controls were implemented to manage access, versioning, and retention policies across confidential datasets.
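Normalization rules of the kind mentioned above might, for scraped product listings, resemble the sketch below. The field names, the accepted availability strings, and the assumption that commas are thousands separators are all illustrative choices, not rules taken from the project.

```python
import re
from decimal import Decimal, InvalidOperation


def normalize_price(raw):
    """Return a Decimal for strings such as '$1,299.00', or None if unparseable.

    Assumes commas are thousands separators (US-style formatting).
    """
    cleaned = re.sub(r"[^\d.,-]", "", raw or "").replace(",", "")
    try:
        return Decimal(cleaned)
    except InvalidOperation:
        return None


def normalize_record(raw):
    """Map a raw scraped dict onto a fixed schema with cleaned values."""
    availability = (raw.get("availability") or "").strip().lower()
    return {
        # Collapse runs of whitespace left over from HTML layout.
        "name": " ".join((raw.get("name") or "").split()),
        "price": normalize_price(raw.get("price")),
        "in_stock": availability in {"in stock", "available"},
    }


if __name__ == "__main__":
    raw = {"name": "  Widget \n Pro ", "price": "USD 19.99", "availability": " In Stock "}
    print(normalize_record(raw))
```

Returning `None` for unparseable prices, rather than guessing, lets a downstream validation step count and review failures instead of silently storing bad values.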

This collaboration allowed Kroll’s data science teams to focus on interpretation and modeling rather than extraction and cleaning, improving both the speed and quality of their insights.

The engagement with Kroll led to measurable improvements in data accuracy, processing speed, and research efficiency.

DevsData LLC’s scraping infrastructure allowed the client to process more than 300 million confidential records securely and extract data from over 30 websites across finance, media, and retail sectors. The automation framework replaced manual research workflows with continuous data pipelines while maintaining compliance and system stability.

All scrapers were deployed on Google Cloud using multiple virtual instances, enabling parallel data processing and real-time monitoring. Kroll’s analytics teams can now access continuously updated datasets without relying on external vendors or manual input, which has improved the precision and timeliness of their market intelligence. The collaboration has since evolved into an ongoing partnership focused on maintenance, refinement, and scaling of data systems as new requirements emerge.

Key project outcomes are summarized below:

| Category | Outcome |
|---|---|
| Confidential records processed | Over 300 million |
| Websites and sources scraped | More than 30 |
| Cloud infrastructure | Google Cloud multi-instance deployment |
| Data freshness | Real-time updates |
| Project duration | Ongoing collaboration |
| Key technologies | Python (Requests, Selenium, Scrapy, BeautifulSoup), VPN rotation, Cloud task scheduling |

If your organization depends on data-driven insights, accuracy and scalability are essential. DevsData LLC helps global companies like Kroll transform complex, protected online sources into structured datasets ready for analysis.

Our engineers specialize in secure, large-scale web scraping, data engineering, and automation for industries where precision and confidentiality are critical. Whether you need to build a new data pipeline or enhance an existing one, we can design a solution tailored to your operational goals.

To discuss your project, contact us at general@devsdata.com or visit www.devsdata.com.
